Flaky Test Cases: Use Cases & Guide

This article explains what flaky tests are, walks through common cases in which they appear, and offers tips on how to get rid of them.

In the world of software development, tests are an integral part of ensuring the functionality and reliability of a system. However, not all tests are created equal. Some of them, known as "flaky tests," can be particularly challenging.

A flaky test is a test that both passes and fails with the same code. Its outcome is non-deterministic: it can change between consecutive runs even when nothing in the code has changed. This inconsistency causes confusion and frustration among developers.

Flaky tests are not only annoying but also harmful. They undermine trust in automation, consume valuable developer time, and, if they are not addressed, can lead to the release of buggy software because developers learn to ignore test failures. They are common in both small-scale and large-scale software projects.

When Do We Encounter Flaky Tests?

Flaky tests can creep into your testing suite at any point and are often encountered in the following scenarios:

🕦 Timing Issues

If a test assumes certain operations will happen in a particular order or within a specific timeframe, it might fail when those assumptions aren't met.

🚦 Concurrency Issues

Tests may behave unpredictably when multiple threads or processes are involved, because the order in which their operations interleave can differ from one run to the next.

👯 Test Order Dependencies

If a test depends on the state left by previous tests, it can become flaky: it may fail when the tests run in a different order or when it runs on its own.

🏢 External System Dependencies

Tests depending on an external system or service can become flaky if that system experiences issues.

🤯 Randomness in Input or Behavior

If the test uses randomly generated input or depends on components with randomized behavior, it may be flaky.

Flaky Test Cases

To understand the nature of flaky tests, it helps to examine some specific scenarios that developers often encounter. Each example shows how a different situation can lead to flakiness and inconsistent test results.

1️⃣ Asynchronous Programming Test

In JavaScript and other languages that support asynchronous programming, flaky tests often occur because asynchronous operations do not necessarily complete in the order in which the code starts them.

For instance, a developer might write a test for a function that includes a setTimeout operation or an API call, and the test checks for a result immediately after the call.

However, the asynchronous operation might not have completed when the test runs the check, which leads to a failure. The same test passes whenever the operation happens to complete before the check.

This lack of predictability is the hallmark of a flaky test. It is vital to handle asynchronous operations properly in tests, using mechanisms like async/await in JavaScript or similar constructs in other languages, so that the test waits for the operation to finish before checking its result.
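The minimal Python asyncio sketch below contrasts the two approaches. `fetch_user_name` is a hypothetical stand-in for an API call, and its `delay` parameter simulates latency that varies between runs. `flaky_check` starts the operation and checks the result after an arbitrary 10 ms pause, while `reliable_check` awaits the operation itself. The names and timings are illustrative assumptions, not taken from any real test suite.

```python
import asyncio


async def fetch_user_name(delay: float) -> str:
    """Hypothetical stand-in for an API call whose latency varies between runs."""
    await asyncio.sleep(delay)
    return "alice"


async def flaky_check(delay: float) -> bool:
    cache: dict[str, str] = {}

    async def load() -> None:
        cache["user"] = await fetch_user_name(delay)

    # Start the operation but do not wait for it to finish.
    task = asyncio.create_task(load())
    # Assume 10 ms is "enough time": true for fast responses, false for slow ones.
    await asyncio.sleep(0.01)
    passed = cache.get("user") == "alice"
    await task  # let the background task finish before returning
    return passed


async def reliable_check(delay: float) -> bool:
    # Await the operation itself, so the check runs only after it has completed.
    name = await fetch_user_name(delay)
    return name == "alice"
```

With identical code under test, `asyncio.run(flaky_check(0.0))` returns `True` but `asyncio.run(flaky_check(0.05))` returns `False`, whereas `reliable_check` returns `True` for both delays.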

2️⃣ Database Test

Flaky tests often crop up in environments where multiple tests run in parallel against a shared database.

Consider a scenario where Test A reads from a database expecting certain data, while Test B, running concurrently, modifies the same data in the database. If Test B changes the data before Test A reads it, Test A could fail, leading to a flaky test case.

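A well-designed testing setup prevents such concurrent modification so that tests do not interfere with each other, for example by giving each test its own database or schema, or by running each test inside a transaction that is rolled back when it finishes.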
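Here is a small sketch of the per-test database approach, using Python's built-in `sqlite3` with in-memory databases. To keep the result reproducible, it simulates the bad interleaving sequentially (Test B happens to run before Test A) rather than with real threads. The table, the `check_*` functions and the `run_shared`/`run_isolated` runners are hypothetical.

```python
import sqlite3
from typing import Callable

Check = Callable[[sqlite3.Connection], bool]


def make_db() -> sqlite3.Connection:
    """Create a private in-memory database seeded with known data."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE accounts (name TEXT PRIMARY KEY, balance INTEGER)")
    conn.execute("INSERT INTO accounts VALUES ('alice', 100)")
    conn.commit()
    return conn


def balance(conn: sqlite3.Connection) -> int:
    return conn.execute("SELECT balance FROM accounts WHERE name = 'alice'").fetchone()[0]


def check_a_reads(conn: sqlite3.Connection) -> bool:
    # "Test A" expects the seeded balance.
    return balance(conn) == 100


def check_b_withdraws(conn: sqlite3.Connection) -> bool:
    # "Test B" modifies the same row that Test A reads.
    conn.execute("UPDATE accounts SET balance = balance - 30 WHERE name = 'alice'")
    conn.commit()
    return balance(conn) == 70


def run_shared(order: list[Check]) -> dict[str, bool]:
    # Every check uses the same database, so results depend on which runs first.
    conn = make_db()
    try:
        return {check.__name__: check(conn) for check in order}
    finally:
        conn.close()


def run_isolated(order: list[Check]) -> dict[str, bool]:
    # Every check gets its own freshly seeded database.
    results: dict[str, bool] = {}
    for check in order:
        conn = make_db()
        try:
            results[check.__name__] = check(conn)
        finally:
            conn.close()
    return results
```

With the shared database, `check_a_reads` passes when it runs first and fails when `check_b_withdraws` runs before it. With isolated databases, both checks pass in either order.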

3️⃣ UI Tests

User interface (UI) tests, particularly in the context of web development, are notorious for being flaky.

A common problem is dealing with operations that take variable amounts of time. For example, a test might try to click a button as soon as the page loads. If the button takes longer to appear than expected, for instance because a network request is slow, the test fails. On a subsequent run, if the button loads in time, the same test passes.

UI tests must be written with these timing issues in mind, often by using explicit "wait" operations that poll until an element is present or ready, rather than fixed pauses, to ensure that elements are loaded before the test interacts with them.
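The following is a framework-agnostic sketch of a polling wait. `wait_until` is a hypothetical helper, and `FakePage` is a hypothetical stand-in for a browser page whose button appears only after a delay. Real UI testing tools ship their own explicit-wait utilities, and this sketch does not reproduce any specific one.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def wait_until(condition: Callable[[], Optional[T]], timeout: float = 5.0,
               interval: float = 0.05) -> T:
    """Poll `condition` until it returns a truthy value or `timeout` seconds pass."""
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} s")
        time.sleep(interval)


class FakePage:
    """Hypothetical page whose button only appears `load_time` seconds after loading."""

    def __init__(self, load_time: float) -> None:
        self._ready_at = time.monotonic() + load_time

    def find_button(self) -> Optional[str]:
        # Returns None until the simulated load has finished.
        return "submit-button" if time.monotonic() >= self._ready_at else None
```

Calling `find_button()` immediately after creating `FakePage(load_time=0.2)` returns `None`, which is exactly what a test that clicks right away would see. `wait_until(page.find_button, timeout=2.0)` instead returns `"submit-button"` once it appears, and it still fails clearly with a `TimeoutError` if the element never loads.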

4️⃣ Randomness in Tests

Sometimes, tests may incorporate randomness, such as random input data or random order of execution. While this can be useful in certain testing strategies, it can also lead to flaky tests.

For instance, a test could pass or fail based on the random data it receives. Or, tests that depend on the order in which they are run could yield inconsistent results if that order is randomized.

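It's important to understand and manage the potential implications of randomness in your tests. For example, recording the random seed a test used makes it possible to reproduce a failing run exactly.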
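The sketch below shows one way to keep random test data reproducible. It generates data from an explicit seed using a private `random.Random` instance and logs that seed. The `TEST_SEED` environment variable and both helper functions are hypothetical conventions, not features of any particular test runner.

```python
import os
import random


def make_test_input(seed: int, size: int = 5) -> list[int]:
    """Generate "random" test data from an explicit seed so any run can be replayed."""
    rng = random.Random(seed)  # private generator: unaffected by other code using `random`
    return [rng.randint(-100, 100) for _ in range(size)]


def pick_seed() -> int:
    # Hypothetical convention: reuse TEST_SEED if it is set (to replay a failure),
    # otherwise pick a fresh seed for this run.
    seed = int(os.environ.get("TEST_SEED", random.randrange(2**32)))
    print(f"TEST_SEED={seed}")  # log the seed so a failing run can be reproduced
    return seed
```

Each run can still explore different data, but when one fails, rerunning with the logged `TEST_SEED` regenerates exactly the same input.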

5️⃣ External System Dependencies

Tests that rely on external systems, such as web services, APIs, or hardware, can become flaky if these systems are not consistently available or behave unpredictably.

For example, a test that depends on an API might fail if the API goes down temporarily. But if the test is rerun later when the API is available again, it might pass. Mocking or stubbing these external dependencies in tests can make them more reliable and less flaky.
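Here is a minimal sketch of stubbing an external API with Python's `unittest.mock.Mock`. The function `get_temperature`, the client method `get_current`, and the "return None on outage" policy are all hypothetical. The point is that the mock answers instantly and identically on every run, and the outage path can be exercised on purpose.

```python
from typing import Optional
from unittest.mock import Mock


def get_temperature(client, city: str) -> Optional[float]:
    """Hypothetical code under test: asks a weather API client for a reading."""
    try:
        response = client.get_current(city)
    except ConnectionError:
        return None  # hypothetical policy: report "unknown" if the API is unreachable
    return round(response["temp_c"], 1)


# A Mock stands in for the real client: no network, same answer on every run.
healthy_api = Mock()
healthy_api.get_current.return_value = {"temp_c": 21.456}

# The outage case can be tested deliberately instead of waiting for a real outage.
unavailable_api = Mock()
unavailable_api.get_current.side_effect = ConnectionError("API unavailable")
```

`get_temperature(healthy_api, "Paris")` always returns `21.5`, and `get_temperature(unavailable_api, "Paris")` always returns `None`, regardless of the real service's status.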

Recognizing these typical cases of flaky tests can be beneficial in preventing them and in diagnosing and fixing them when they do occur. Identifying the potential sources of flakiness is the first step to tackling them effectively.

Advice to Get Rid of Flaky Tests ⬇️

Flaky tests are a nuisance, but there are strategies to minimize their impact and ideally eliminate them from your test suite:

- Isolate Tests: Each test should be independent of others and capable of being run in any order. It shouldn't rely on the state left by a previous test.

- Avoid Timing Dependencies: Whenever possible, try to avoid writing tests that depend on precise timing. Use callbacks, promises, or other means to ensure operations complete before moving forward in the test.

- Mock External Services: Instead of relying on actual external services or systems, mock them. This makes your tests faster and more reliable.

- Make Tests Deterministic: Avoid uncontrolled randomness in your tests. They should produce the same results given the same initial conditions; if you do use random data, fix or record the seed.

- Re-run Flaky Tests Automatically: Automatic re-runs can keep an intermittent failure from blocking your pipeline, but be careful not to use them as a crutch to ignore flaky tests.
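Re-running a test is also a simple way to detect flakiness rather than just absorb it. The sketch below runs a test several times against unchanged code and reports whether it consistently passes, consistently fails, or is flaky. `classify` and the three demo tests are hypothetical, and `sometimes_fails` uses a deterministic alternating sequence as a stand-in for a real race or timing issue. This does not describe how any particular CI tool or plugin handles re-runs.

```python
import itertools
from typing import Callable


def classify(test: Callable[[], None], runs: int = 5) -> str:
    """Run `test` several times against unchanged code and summarize the outcomes.

    Returns "pass" or "fail" when every run agrees, and "flaky" when they differ.
    """
    if runs < 1:
        raise ValueError("runs must be at least 1")
    outcomes = set()
    for _ in range(runs):
        try:
            test()
            outcomes.add("pass")
        except AssertionError:
            outcomes.add("fail")
    return "flaky" if len(outcomes) > 1 else outcomes.pop()


# Deterministic stand-in for an intermittent failure: True, False, True, False, ...
_coin = itertools.cycle([True, False])


def sometimes_fails() -> None:
    assert next(_coin), "simulated intermittent failure"


def always_passes() -> None:
    assert 1 + 1 == 2


def always_fails() -> None:
    assert 1 + 1 == 3
```

One reasonable policy is to report and track any test classified as "flaky" so that it gets fixed, instead of silently retrying it until the build turns green.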

Remember, the key to effective testing is trust. If you can't trust your tests due to flakiness, they lose much of their value. So it's important to pay attention to test design and address flakiness head-on when it occurs.

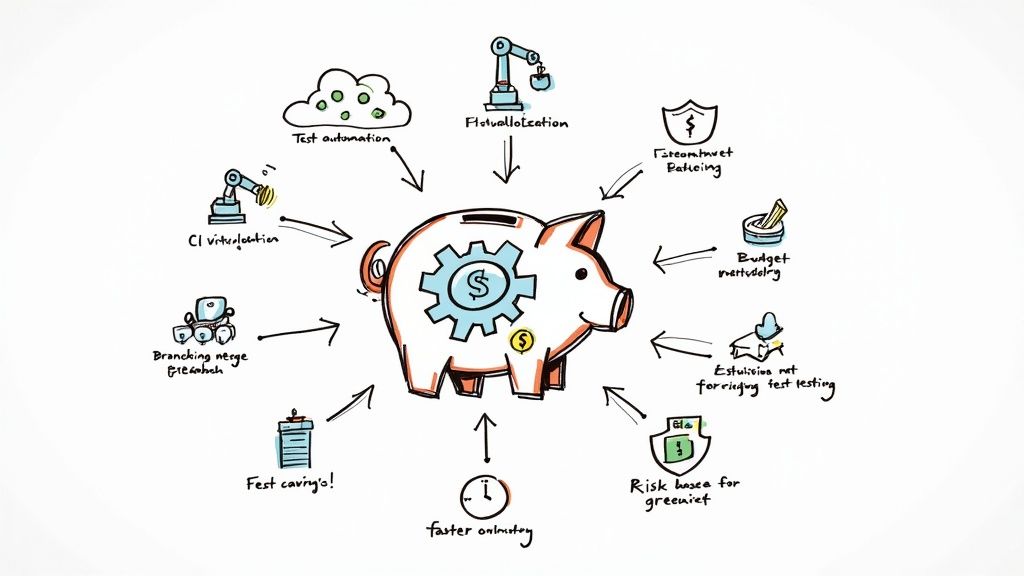

Mergify Flakyguard Product

Flakyguard uses artificial intelligence to help you identify and fix flaky tests in your software, and it works with any testing framework. Our team has been working on it for months, and it's about to change how you work as a developer.